2.2.1. Architecture

The Omni-Path interconnect is designed to deliver low latency and high bandwidth, making it a fabric solution suitable for large-scale deployments.

Omni-Path introduces innovations designed for multi-generation scalability, including link-layer reliability, extended fabric addressing, and inter-generational compatibility. It emphasizes robust partitioning, advanced Quality of Service (QoS) features, and centralized fabric management.

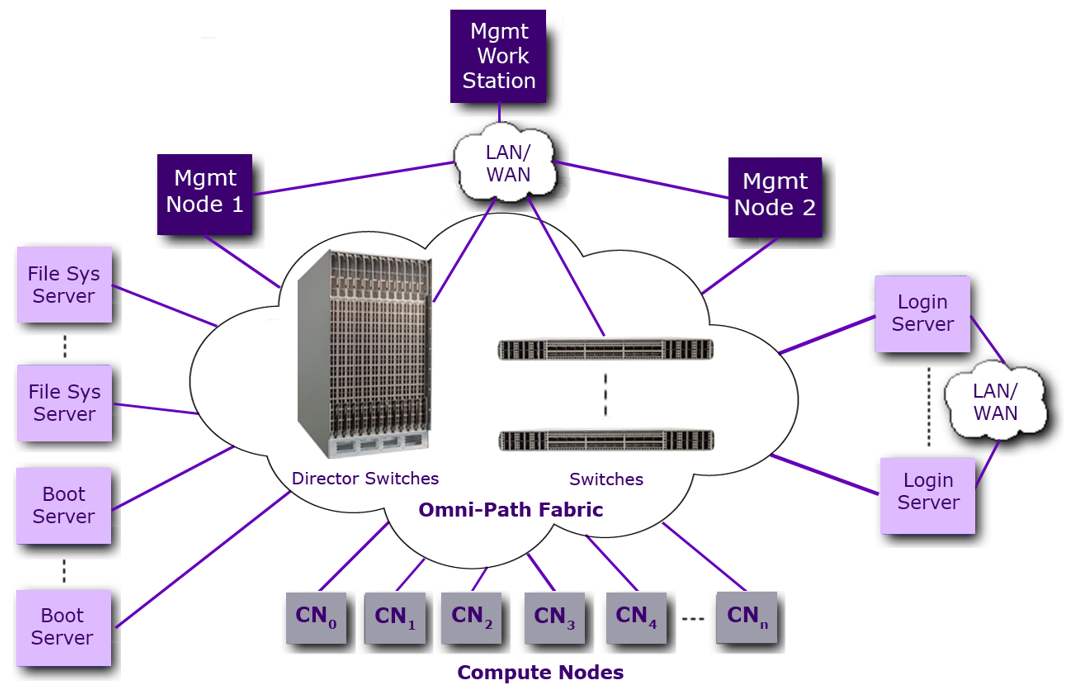

The following figure shows a simple Omni-Path-based fabric, consisting of different types of nodes and servers.

CN5000 Director Class Switch is shown as part of the general Omni-Path Fabric example

Fabric reliability is enhanced by combining link-level retry, typical of HPC fabrics, with end-to-end retry, typical of traditional networks. Layer 2 network addressing is also extended to support very large-scale systems.
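The retry protocols themselves are not described here. The following Python sketch illustrates only the general idea of layering the two mechanisms: a lost packet is first retried on the hop where it was lost, and the source retransmits end to end only when a hop exhausts its retry budget. The loss model, hop count, and retry budgets (`max_link_retries`, `max_e2e_retries`) are hypothetical, and the code does not model the actual Omni-Path link-layer protocol.

```python
import random
from dataclasses import dataclass


@dataclass
class RetryStats:
    link_retries: int = 0  # resends performed locally on a single hop
    e2e_retries: int = 0   # resends of the whole path by the source


def send_over_hop(loss_rate: float, rng: random.Random, stats: RetryStats,
                  max_link_retries: int) -> bool:
    """Hypothetical link-level retry: the sender on one hop resends a lost
    packet locally until it gets through or the hop's retry budget runs out."""
    for attempt in range(max_link_retries + 1):
        if attempt > 0:
            stats.link_retries += 1
        if rng.random() >= loss_rate:  # packet crossed this hop intact
            return True
    return False


def send_end_to_end(hops: int, loss_rate: float, rng: random.Random,
                    stats: RetryStats, max_link_retries: int = 3,
                    max_e2e_retries: int = 5) -> bool:
    """Hypothetical end-to-end retry: if any hop gives up, the source
    retransmits the packet along the whole path."""
    for attempt in range(max_e2e_retries + 1):
        if attempt > 0:
            stats.e2e_retries += 1
        # all() stops at the first hop that gives up, as a dropped packet would.
        if all(send_over_hop(loss_rate, rng, stats, max_link_retries)
               for _ in range(hops)):
            return True
    return False


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so runs are repeatable
    stats = RetryStats()
    delivered = sum(send_end_to_end(hops=4, loss_rate=0.05, rng=rng, stats=stats)
                    for _ in range(1000))
    print(delivered, stats)
```

In the demo run, most losses are absorbed by the inexpensive per-hop retry, so end-to-end retransmissions, which cost a full path traversal, should be rare.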

The software ecosystem includes the following key APIs:

OpenFabrics Interfaces (OFI) represents the strategic direction for high-performance user-level and kernel-level network APIs.

Verbs provide support for existing remote direct memory access (RDMA) applications and include extensions to support Omni-Path fabric management.

Sockets are supported through the IPoIB and rSockets interfaces. This allows many existing applications to run on the Omni-Path Fabric without modification and provides TCP/IP features such as IP routing and network bonding.
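Because IPoIB presents the fabric as an ordinary IP interface, socket code needs no fabric-specific calls. The Python sketch below is a plain TCP echo exchange that uses only the standard `socket` module; the assumption is that on a fabric node the same code would be pointed at the address of that node's IPoIB interface instead of loopback. rSockets is a separate interface and is not exercised here.

```python
import socket
import threading


def _echo_once(listener: socket.socket) -> None:
    """Accept one connection and echo everything received once the peer
    closes its write side."""
    conn, _ = listener.accept()
    with conn:
        chunks = []
        while chunk := conn.recv(4096):
            chunks.append(chunk)
        conn.sendall(b"".join(chunks))


def round_trip(host: str, message: bytes) -> bytes:
    """Send `message` to a local echo server bound to `host` and return
    the reply. Only standard socket calls are used; nothing is fabric-specific."""
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as listener:
        listener.bind((host, 0))  # port 0 lets the OS pick a free port
        listener.listen(1)
        port = listener.getsockname()[1]
        server = threading.Thread(target=_echo_once, args=(listener,))
        server.start()
        with socket.create_connection((host, port)) as client:
            client.sendall(message)
            client.shutdown(socket.SHUT_WR)  # signal end of request
            chunks = []
            while chunk := client.recv(4096):
                chunks.append(chunk)
        server.join()
    return b"".join(chunks)


if __name__ == "__main__":
    # Loopback works anywhere. On a fabric node, substitute the IPv4 address
    # assigned to its IPoIB interface (no such address is given in this guide).
    print(round_trip("127.0.0.1", b"hello fabric"))
```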

Higher-level communication libraries, such as Message Passing Interface (MPI) libraries, are layered on top of these low-level APIs. This permits existing HPC applications to immediately take advantage of advanced CN5000 Omni-Path features.

Omni-Path integrates CN5000 SuperNICs, switches, and comprehensive fabric management and development tools into a complete end-to-end solution.

2.2.1.1. CN5000 SuperNIC

Hosts connect to the fabric through SuperNICs, which translate instructions between the host processor and the fabric. SuperNICs implement the physical and link layer logic required for nodes to attach to the fabric and exchange packets with other servers or devices. Additionally, SuperNICs contain specialized logic to execute and accelerate upper-layer protocols.

2.2.1.2. CN5000 Omni-Path Switches

CN5000 Omni-Path Switches operate at OSI Layer 2 (link layer) and function as packet-forwarding devices within the CN5000 Omni-Path Fabric. They implement QoS features such as virtual lanes, congestion management, and adaptive routing. Each switch includes a management agent and is centrally managed by Fabric Manager software. Centralized management involves configuring switch forwarding tables to establish specific fabric topologies, setting QoS and security parameters, and providing alternate routes for adaptive routing.
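The division of labor described above, where Fabric Manager software programs forwarding tables and alternate routes while the switch forwards packets, can be sketched as a table lookup plus a local choice among alternates. In this Python sketch the least-loaded-port selection rule, the integer destination addresses, and the `port_load` congestion signal are all assumptions for illustration; how CN5000 switches actually choose among alternate routes is not specified here.

```python
from dataclasses import dataclass, field


@dataclass
class SwitchModel:
    """Toy switch whose routes are programmed by a central fabric manager."""
    # Destination address -> primary egress port (the forwarding table).
    forwarding_table: dict[int, int] = field(default_factory=dict)
    # Destination address -> alternate egress ports supplied for adaptive routing.
    alternates: dict[int, list[int]] = field(default_factory=dict)
    # Egress port -> queued packets, a stand-in for local congestion state.
    port_load: dict[int, int] = field(default_factory=dict)
    adaptive_routing: bool = True

    def egress_port(self, dest: int) -> int:
        primary = self.forwarding_table[dest]  # KeyError: no route programmed
        if not self.adaptive_routing:
            return primary
        candidates = [primary] + self.alternates.get(dest, [])
        # min() returns the first of equally loaded ports, so the primary
        # route wins ties and traffic moves only when an alternate is less busy.
        return min(candidates, key=lambda port: self.port_load.get(port, 0))


if __name__ == "__main__":
    sw = SwitchModel(forwarding_table={7: 1}, alternates={7: [2, 3]},
                     port_load={1: 10, 2: 3, 3: 5})
    print(sw.egress_port(7))
```

Keeping route computation centralized and leaving only the per-packet choice to the switch mirrors the split the text describes: the manager decides which routes are valid, and the switch picks among them.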

2.2.1.3. Omni-Path Management

The CN5000 Omni-Path Fabric supports redundant Fabric Managers, which centrally manage all fabric devices (servers and switches) through their associated management agents. The Fabric Manager is one of the CN5000 Omni-Path Fabric Software components; during fabric initialization, one Fabric Manager instance is selected as the primary.
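The rule for choosing the primary among redundant Fabric Managers is not stated here. The Python sketch below assumes a rule modeled on InfiniBand subnet manager election: highest configured priority first, with the lowest GUID as tie-breaker. Treat both the rule and the `FabricManagerInstance` fields as hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricManagerInstance:
    name: str
    priority: int      # assumed: higher value is preferred
    guid: int          # assumed tie-breaker: lower GUID wins
    alive: bool = True


def select_primary(instances: list[FabricManagerInstance]) -> FabricManagerInstance:
    """Pick the primary among responsive instances; the rest act as standbys."""
    live = [fm for fm in instances if fm.alive]
    if not live:
        raise RuntimeError("no Fabric Manager instance is available")
    # Sort key: highest priority first, then lowest GUID.
    return min(live, key=lambda fm: (-fm.priority, fm.guid))


if __name__ == "__main__":
    fms = [FabricManagerInstance("fm-a", 8, 0x20),
           FabricManagerInstance("fm-b", 10, 0x30),
           FabricManagerInstance("fm-c", 10, 0x10)]
    print(select_primary(fms).name)
```

Re-running the selection whenever the current primary stops responding gives a simple failover model: the best remaining standby takes over.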

The primary Fabric Manager is responsible for:

Discovering the fabric topology.

Setting up fabric addressing and other values needed to operate the fabric.

Creating and populating the switch forwarding tables.

Maintaining the Fabric Management Database.

Monitoring fabric utilization, performance, and statistics.
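The first three responsibilities listed above can be sketched as a breadth-first sweep, an address-assignment pass, and a per-switch shortest-path computation. In this Python sketch the topology dictionary stands in for what a real Fabric Manager learns by exchanging management packets with devices; the sequential addressing scheme and min-hop routing with lowest-port tie-breaking are assumptions, since the actual addressing and routing algorithms are not specified here.

```python
from collections import deque

# Hypothetical fabric description: node -> {port number: neighbor node}.
Links = dict[str, dict[int, str]]


def discover(links: Links, switches: set[str], start: str) -> list[str]:
    """Breadth-first sweep from the FM's node. Only the start node and
    switches are expanded, because end nodes do not forward traffic."""
    order, seen, queue = [start], {start}, deque([start])
    while queue:
        node = queue.popleft()
        if node != start and node not in switches:
            continue
        for port in sorted(links[node]):
            neighbor = links[node][port]
            if neighbor not in seen:
                seen.add(neighbor)
                order.append(neighbor)
                queue.append(neighbor)
    return order


def assign_addresses(order: list[str]) -> dict[str, int]:
    """Hypothetical scheme: consecutive addresses in discovery order, from 1."""
    return {node: addr for addr, node in enumerate(order, start=1)}


def build_forwarding_tables(links: Links, switches: set[str],
                            addresses: dict[str, int]) -> dict[str, dict[int, int]]:
    """Min-hop routing: for each switch, map every other node's address to
    the egress port on a shortest path (ties go to the lowest port number)."""
    tables = {}
    for sw in switches:
        first_port = {sw: None}  # node -> egress port on sw toward that node
        queue = deque([sw])
        while queue:
            node = queue.popleft()
            if node != sw and node not in switches:
                continue  # end nodes are destinations, not transit hops
            for port in sorted(links[node]):
                neighbor = links[node][port]
                if neighbor not in first_port:
                    # Inherit the first hop of the node we reached it through.
                    first_port[neighbor] = port if node == sw else first_port[node]
                    queue.append(neighbor)
        tables[sw] = {addresses[n]: p for n, p in first_port.items() if p is not None}
    return tables


if __name__ == "__main__":
    links = {
        "fm_host": {1: "sw1"},
        "hostA": {1: "sw2"},
        "sw1": {1: "fm_host", 2: "sw2"},
        "sw2": {1: "hostA", 2: "sw1"},
    }
    switches = {"sw1", "sw2"}
    addresses = assign_addresses(discover(links, switches, "fm_host"))
    print(addresses)
    print(build_forwarding_tables(links, switches, addresses))
```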

Management packets from the primary Fabric Manager are transmitted in-band over the same physical wires as regular data packets, using dedicated buffers on a specific virtual lane (VL15). Built-in request/response retry mechanisms detect and recover from lost management packets.
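A request/response retry loop of the kind described above can be sketched as follows. The VL15 tag comes from the text; the `tid` transaction-ID field, the retry budget, and the `send` callable (which returns `None` when a timeout expires) are hypothetical stand-ins, not the Fabric Manager's actual packet format or API.

```python
from dataclasses import dataclass
from typing import Callable, Optional

MANAGEMENT_VL = 15  # the text states management packets travel on VL15


@dataclass(frozen=True)
class MgmtPacket:
    tid: int      # hypothetical transaction ID pairing a response with its request
    payload: str
    vl: int = MANAGEMENT_VL


class ManagementTimeout(Exception):
    """No matching response arrived within the retry budget."""


def request_with_retry(send: Callable[[MgmtPacket], Optional[MgmtPacket]],
                       request: MgmtPacket, max_retries: int = 3) -> MgmtPacket:
    """Send a request and resend it until a matching response arrives.

    `send` returns None when the timeout expires, which covers both a lost
    request and a lost response; the requester cannot tell them apart."""
    for _ in range(max_retries + 1):
        response = send(request)
        # Ignore stale or unrelated responses: only a matching TID counts.
        if response is not None and response.tid == request.tid:
            return response
    raise ManagementTimeout(
        f"TID {request.tid}: no response after {max_retries + 1} attempts")


if __name__ == "__main__":
    attempts = []

    def flaky_send(pkt: MgmtPacket) -> Optional[MgmtPacket]:
        attempts.append(pkt)
        # Simulated link: the first transmission is lost, later ones succeed.
        return None if len(attempts) == 1 else MgmtPacket(pkt.tid, "response")

    reply = request_with_retry(flaky_send, MgmtPacket(tid=1, payload="get"))
    print(reply.payload, len(attempts))
```

Blind resending is safe for read-only queries; for requests that change device state, a real protocol also has to tolerate the responder seeing the same request twice, which this sketch does not address.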